Or how, in 2004, I liked the editing interface of a small Amsterdam cultural institution, and why I am still waiting for it to get copied.

In 2004 I encounter the website of the Amsterdam magazine/web platform/art organisation Mediamatic. The site is remarkable in several ways. Firstly, it shows off the potential of designing with native web technologies. Its layout is a re-appraisal of one of the core fonts available to almost all surfers: Georgia, and its Italic. The striking text-heavy layout uses this typeface for body text, in unconventionally large headings and lead-ins. Secondly, the site opens up a whole new editing experience. In edit mode, the page looks essentially the same as on the public-facing site, and as I change the title it remains all grand and Italic. I had been used to content management systems offering me sad unstyled form fields in a default browser style, decoupling the input of text completely from the final layout. That one can get away from the default browser style, and edit in the same style as the site itself, is nothing short of a revelation to me, even if desktop software has been showing this is possible for quite some time already.

In 2004, there are more websites with an editing experience like Mediamatic’s: Flickr, for example, makes it possible to change the title and metadata of a photo right on the photo page itself, if one is logged in. Yet flash forward to 2014, and most Content Management Systems still offer us the same inhospitable form fields that look nothing like the page they will produce.

If we look at the experience of writing on WordPress, the most used blogging platform, the first thing one notes is that the place where one edits the posts is quite distinct from the place that is visited by the reader: you are in the ‘back end’. There is some visual resemblance between the editing interface and the article: headings are bigger than body text, italics become italic. But the font does not necessarily correspond to the resulting posts, nor do the line-width, line-height and so forth. Some other elements are not visual at all: to embed YouTube and the like one uses ‘shortcodes’.

Technologically, what was possible in 2004 should still be possible now—the web platform has since then only advanced, offering new functionality like contentEditable which allows one to easily make a part of a webpage editable, without much further scripting. So where are the content management systems that take advantage of these technologies? To answer this question, we will have to look at how web technologies come about.

The dominant computing paradigm and its counter-point

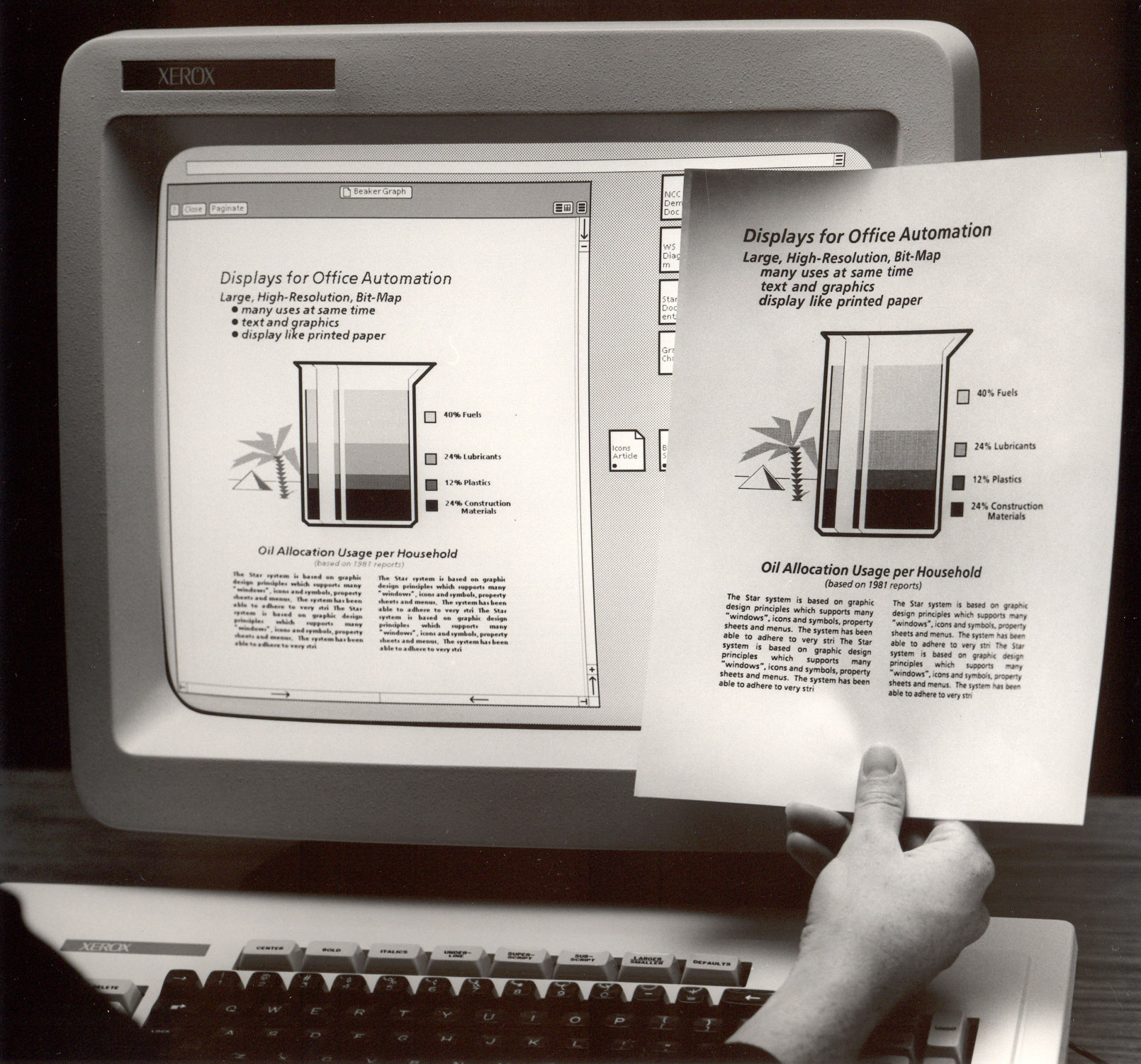

An editing interface that visually resembles its result is known as WYSIWYG, What You See Is What You Get. The term dates from the introduction of the graphical user interface. The Apple Macintosh offers the first mainstream WYSIWYG programs, and the Windows 3.1 and especially Windows 95 operating systems make this approach the dominant one.

A word processing program like Microsoft Word is a prototypical WYSIWYG interface: we edit in an interface that visually resembles as closely as possible the result that comes out of the printer. Most graphic designers also work in WYSIWYG programs: this is the canvas-based paradigm of programs like Illustrator, InDesign, Photoshop, Gimp, Scribus and Inkscape.

But being the dominant paradigm for user-interfaces, especially in document creation and graphic design, does not mean the WYSIWYG legacy is the only paradigm in use. Programmer and author Michael Lopp, also known as Rands, tries to convince us that ‘nerds’ use a computer in a different way. From his self-help guide for the nerd’s significant other, The Nerd Handbook:

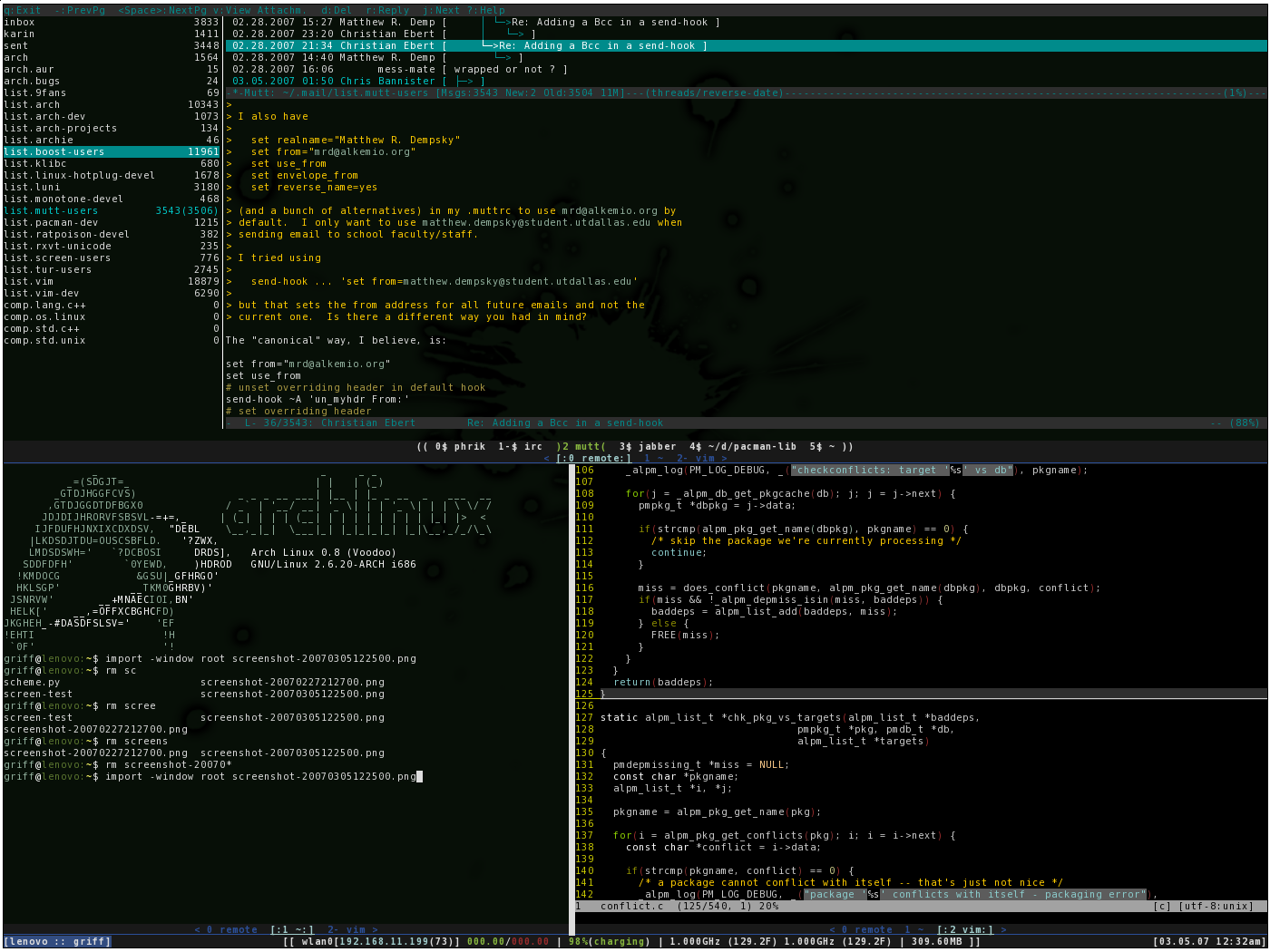

Whereas everyone else is traipsing around picking dazzling fonts to describe their world, your nerd has carefully selected a monospace typeface, which he avidly uses to manipulate the world deftly via a command line interface while the rest fumble around with a mouse.

Rands introduces a hypothetical nerd that uses a text-based terminal interface to interact with her computer. He mentions the ‘command line’, the kind of computer interface that sees one typing in commands and which is introduced in I like tight pants and absolute beginners: Unix for Art students.

Yet who exactly is it who likes to use their computer in such a way? ‘Nerd’ is a terribly imprecise term: one can be a nerd at many things, and it is mainly a derogatory term. But it seems safe to suggest that those using the command line have some familiarity with text as an interface, and with using programming codes: people that are steeped in, or attracted by, the practice of programming.

Programmers as Gatekeepers

Since the 1990ies desktop publishing revolution Graphic Designers have been able to implement their own print designs without the intervention of engineers. In most cases this is not true for the web: the implementation of websites is ultimately done by programmers. These programmers often have an important say in the technology that is used to create a website. It is only normal that the programmers’ values and preferences are reflected in these choices.

This effect is reinforced because the programming community largely owns its own means of production. In contrast with print design, the programming technologies used in creating web sites (the programming languages, the libraries, the content management systems) are almost always Free Software and/or Open Source. Even commercial Content Management Systems are often built upon existing Open Source components. There are many ways in which this is both inspiring and practical. Yet if this engagement with a collectively owned and community-driven set of tools is commendable, it has one important downside: the values of the community directly impact the character of the tools available.

Hacker Culture

Programming is not just an activity, it is embedded in a culture. All the meta-discourse surrounding programming contributes to this culture. A particularly influential strand of computing meta-discourse is what can be called ‘Hacker Culture’. If I were to characterise this culture, I would do so by sketching two highly visible programmers that are quite different in their practice, yet share a set of common cultural references in which the concept of a ‘hacker’ is important.

On the one hand we can look at Richard Stallman, a founder of the Free Software Movement, tireless activist for ‘Software Freedom’. Having coded essential elements of what was to become GNU/Linux, he is just as well known for his foundational texts such as the GPL license. The concept of a hacker is important to him, as evidenced in his article ‘On Hacking’.

On the other hand there is someone like Paul Graham, a Silicon Valley millionaire and venture capitalist. Influential in ‘start-up’ culture, Graham has turned his own experience into something of a template for start-ups to follow: start with a small group of twenty-something programmers/entrepreneurs and create a company that tries to grow as quickly as possible, attract funding, and then either fail, be bought, or in extremely rare cases become a large publicly traded company. His vision of the start-up is both codified in writing and brought into practice at the ‘incubator’ Y Combinator.

As different as Graham’s trajectory might be from Stallman’s, he too has written an article on what it means to be a hacker. The popular discussion forum he has run is called Hacker News. In fact, Graham refers to the people that start start-ups as hackers.

That Stallman and Graham share a certain culture is shown by the fact that their conceptions of what a hacker is are far removed from the everyday usage of the word. While to most people a hacker means someone who breaks into computer systems, Stallman and Graham agree that the true sense of hacker is quite different.

Thus, contesting the mainstream concept of hacker is itself important in the subculture: Douglas Thomas already describes this mechanism in his thoroughly readable introduction on Hacker Culture (2002). A detailed anthropological analysis of a slice of Hacker Culture is performed in Gabriella Coleman’s Coding Freedom: the Ethics and Aesthetics of Hacking (2012), though it seems to focus on Free Software developers of the most idealistic persuasion and seems less interested in the major role Silicon Valley dollars play in fuelling Hacker Culture. For this tension too is at the heart of hacker culture: even if Hacker Culture is a place to push new conceptions of technology, ownership and collaboration, the Hacker revolution is financed by working ‘for the man’. The Hacker Culture blossoming at universities in the 1960s was only possible through liberal funding from the Department of Defense; today many leading Free and Open Source software developers work at Google.

Grown (Wo)men Afraid of Mice

If we want to know about Hacker Culture’s attitude towards user interfaces, we can start to look for anecdotal evidence. In an interview about his computing habits, arch-hacker Stallman actually seems to resemble quite closely the hypothetical GUI-eschewing ‘nerd’ from Rands’ article:

I spend most of my time using Emacs [A text-editor]. I run it on a text console [A terminal], so that I don’t have to worry about accidentally touching the mouse-pad and moving the pointer, which would be a nuisance. I read and send mail with Emacs (mail is what I do most of the time).

I switch to the X console [A graphical user interface] when I need to do something graphical, such as look at an image or a PDF file.

Richard Stallman does not even use a mouse. This might seem an outlier position, yet he is not the only hacker to take such a position. Otherwise, there would be no audience for the open source window manager called ‘ratpoison’. This software allows one to control the computer without any use of the mouse, killing it metaphorically.

The mouse is invented in the early sixties by Douglas Engelbart. It is incorporated into the Xerox Star system that goes on to inspire the Macintosh computer. Steve Jobs commissions Dean Hovey to come up with a design that is cheap to produce, simpler and more reliable than Xerox’s version. After the mouse is introduced with the Macintosh computer in 1984 it quickly spreads to PCs, and it becomes indispensable to everyday users once the Windows OS becomes mainstream in the 1990s.

The mouse is part of the paradigm of these graphical user interfaces, just like the WYSIWYG interaction model. The ascendancy of these interaction models is linked to (and has probably enabled) personal computers becoming ubiquitous in the 1990s. It is not this tradition that Stallman and like-minded spirits inscribe themselves in. They prefer to refer to the roots of the Hacker paradigm of computing that stretch back further: back when computers were not yet personal, and when they ran an operating system called ‘Unix’.

Unix, Hacker Culture’s Gilgamesh epic

The Unix operating system plays a particular role in the system of cultural values that make up programming culture. Developed in the 1970s at AT&T, it becomes the dominant operating system of the mainframe era of computing. In this setup, one large computer runs the main software, and various users log in to this central computer from their own terminal. This terminal is an interface that allows one to send commands and view the results; the actual computation is performed on the mainframe. Variants of Unix become widely used in the world of the enterprise and in academia.

The very first interface to the mainframe computers is the teletype: an electronic typewriter that allows one to type commands to the computer, and to subsequently print the response. As teletypes get replaced by computer terminals, with CRT displays and keyboards, interfaces often stay decidedly minimal. It is much cheaper to use text characters to create interfaces than to have full-blown graphical user interfaces, especially as the state of the interface has to be sent over the wire from the mainframe to the terminal. Everyone who has worked in a large organisation in the 1980s or 1990s will remember the keyboard-driven user interfaces of the time.

This vision of computing is profoundly disrupted by the success of the personal computer. Bill Gates’s vision of ‘a personal computer in each home’ becomes a reality in the 1990s. A personal computer is self-sufficient, storing its data on its own hard drive, performing its own calculations. The PC is not hindered by having to make roundtrips to the mainframe continuously, and as processing speed increases PCs replace text-based input with sophisticated graphical user interfaces. During the dominance of the Windows operating system, for most mainstream computer users Unix seems to become a relic: after conquering the homes, Windows computers conquer the workplace as well. In 1993’s Jurassic Park, when the computer-savvy girl needs to circumvent computer security to restore the power, she is surprised to find out that it’s a Unix system.

The tables turn when in 2000 Apple’s new OS X operating system uses Unix. At the same time, silently but surely the Linux operating system has been building mind share. A cornerstone of the movement for Free and Open Source software, Linux is a Unix clone that is free for everyone to use, distribute, study and modify. Even if both these unixes are built on the same technology as the UNIX that powers mainframe computers, these newer versions of UNIX are used in a completely different context. Linux and OS X are designed to run on personal computers, and both come with an (optional) Graphical User Interface, making them accessible to users that have grown up on Windows and Mac OS. All of a sudden, a new generation gets to appropriate Unix: a generation which has never had to actually use a Unix system at work.

Alan Kay claims that the culture of programming is forgetful. It is true that a new generation of programmers completely forgets the rejection of UNIX by consumers just years before, let alone wonders about the reasons for its demise. Yet the cultural knowledge embodied in Unix is now part of a community. The way in which Unix is used today might be completely different from the 1970s, but Unix itself and the values it embodies have become something that unites different generations self-identifying with ‘hacker culture’.

The cultural depth of Unix far exceeds naming conventions. Unix has been described as “our Gilgamesh epic” (Stephenson 1999), and its status is that of a living, adored, and complex artifact. Its epic nature is an outgrowth of its morphing flavors, always under development, that nevertheless adhere to a set of well-articulated standards and protocols: flexibility, design simplicity, clean interfaces, openness, communicability, transparency, and efficiency (Gancarz 1995; Stephenson 1999). “Unix is known, loved, understood by so many hackers,” explains sci-fi writer Neal Stephenson (1999, 69), also a fan, “that it can be re-created from scratch whenever someone needs it.”

p.51 Coleman, Coding Freedom - The Ethics and Aesthetics of Hacking. New Jersey, 2012.

The primacy of plain-text

If there is a lingua franca in Unix, it is ‘plain text’. Unix originated in the epoch when users would type in commands on a teletype machine, and typing commands is still considered an essential part of using Unix-like systems today. Many of the core UNIX programs are launched with text commands, and their output is often in the form of text. This is as true for classic UNIX programs as for programs written today. Unix programs are constructed so that the output of one program can be fed into the input of another program: this ability to chain commands in ‘pipes’ depends on the fact that all these programs share the same format of output and input, which is streams of text.
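The chaining idea can be sketched outside the shell as well. The following is a minimal illustration in Python, not how Unix itself implements pipes: each 'program' is a function that takes a stream of text lines and returns one, so any of them can be plugged into any other. The names `grep`, `lowercase`, `count_duplicates` and `pipe` are chosen for this example only; they are loose stand-ins for the behaviour of the similarly named Unix tools.

```python
from collections import Counter
from typing import Callable, Iterable, Iterator, List

Lines = Iterable[str]
Filter = Callable[[Lines], Iterator[str]]


def grep(word: str) -> Filter:
    """Build a filter that keeps only the lines containing `word`."""
    def run(lines: Lines) -> Iterator[str]:
        return (line for line in lines if word in line)
    return run


def lowercase(lines: Lines) -> Iterator[str]:
    """Lower-case every line of the stream."""
    return (line.lower() for line in lines)


def count_duplicates(lines: Lines) -> Iterator[str]:
    """Emit 'count line' per distinct line, sorted by line (roughly sort | uniq -c)."""
    counts = Counter(lines)
    return (f"{n} {line}" for line, n in sorted(counts.items()))


def pipe(source: Lines, *filters: Filter) -> List[str]:
    """Feed the output of each filter into the input of the next one."""
    stream = source
    for f in filters:
        stream = f(stream)
    return list(stream)


text = ["Unix is text", "unix is text", "Word is not", "Unix is pipes"]
result = pipe(text, lowercase, grep("unix"), count_duplicates)
print(result)  # ['1 unix is pipes', '2 unix is text']
```

The filters know nothing about each other; they can be recombined freely only because they all agree on lines of text as their shared format.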

The most central program in the life of a practitioner of Hacker Culture is the text editor. Contrary to a program like Word, a text editor shows the raw text of a file including any formatting commands. This is still the main paradigm for how programmers work on a project: as a bunch of text files organised in folders. This is not inherent to programming (there have been programming environments that store code in a database, or in binary files), but has proved the most lasting and popular way to do so. Unix’ tools are built around and suited for plain text files, so this approach also contributes to the ongoing popularity of Unix—and vice versa.

While programming, one has to learn how to create a mental model of the object programmed. As the programmer only sees the codes, she or he has to imagine the final result while editing, then compile and run the project to see if the projection was correct. This feedback loop is much slower than the feedback loop as we know it from WYSIWYG programs. Maybe it is the experience of slow feedback that gives programmers more tolerance for abstract interfaces than those of us outside this culture.

While WYSIWYG has a shorter feedback loop, it also adds additional complexity. Anyone who has used Microsoft Word knows the scenario: after applying several layers of formatting, the document’s behaviour seems to become erratic: remove a carriage return, and the whole layout of a subsequent paragraph might break. This is because the underlying structure of the rich text document (on the web, this is HTML) remains opaque to the user. With increased ease of use comes a number of edge cases and a loss of control over the underlying structure.

This is a trade-off someone steeped in Hacker Culture might not be willing to make. She or he would rather have an understandable, formal system by which the HTML codes are produced—even if that means editing in an environment not resembling at all the final web page—because they already know how to work this way from their experience in programming.

This is shown by the popularity of a workflow and type of tool that is known as the ‘static site generator’. In this case, the workflow for creating a website is to have a series of plain text files. Some of them represent templates, others content. After a change, the programmer runs the ‘static site generator’ and all the content is pushed through the templates to produce a series of HTML files. The content itself is often written in a code language like ‘Markdown’, that allows one to add some formatting information through typewriter-like conventions: *stars* becomes stars set in italics.
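To make the workflow concrete, here is a deliberately tiny sketch in Python of what such a generator does. It is not modelled on any particular tool: the function names, the single hard-coded template and the convention that the first line of a file is its title are all assumptions made for this example, and the only 'Markdown' it understands is the *stars* convention for emphasis.

```python
import html
import re
from string import Template

# One template shared by every page (a real generator would load it from a file).
PAGE_TEMPLATE = Template("<html><body><h1>$title</h1>\n$body\n</body></html>")


def render_inline(text: str) -> str:
    """Escape HTML special characters, then turn *stars* into <em>stars</em>."""
    escaped = html.escape(text, quote=False)
    return re.sub(r"\*(.+?)\*", r"<em>\1</em>", escaped)


def render_page(source: str) -> str:
    """First line is the title; blank-line separated blocks become paragraphs."""
    title, _, rest = source.partition("\n")
    paragraphs = [p.strip() for p in rest.split("\n\n") if p.strip()]
    body = "\n".join(f"<p>{render_inline(p)}</p>" for p in paragraphs)
    return PAGE_TEMPLATE.substitute(
        title=html.escape(title.strip(), quote=False), body=body
    )


def build_site(sources: dict) -> dict:
    """Map each source file name to an .html name and push its text through the template."""
    return {
        name.rsplit(".", 1)[0] + ".html": render_page(text)
        for name, text in sources.items()
    }


site = build_site({"index.txt": "Hello\n\nSome *stars* here."})
print(site["index.html"])
```

Note the direction of travel: text files go in, HTML comes out, and nothing in the sketch can take an edited HTML page and recover the source file from it.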

Hacker Culture’s bias is holding back interface design

Because programmers are gatekeepers to web technology, and because programmers are influenced by Hacker Culture, the biases of Hacker Culture have an impact outside of this subculture. The world of programming is responsible for its own tools, and contemporary websites are built by programmers upon Open Source libraries developed by other programmers. Shaped by the culture of Unix and plain-text, and by the practice of programming, WYSIWYG interfaces are not interesting to most Open Source developers. Following the mantra to ‘scratch one’s own itch’, developers work on the interfaces that interest them. There are scores of the aforementioned ‘static site generators’: 242 of them, at last count.

Comparatively, the offer of WYSIWYG libraries is meagre. Even if HTML5’s ContentEditable property has been around for ages, it is not used all that often; consequently there are still quite some implementation differences between the browsers. The lack of interest in WYSIWYG editors means the interfaces are going to be comparatively flakey, which in turn confirms programmers looking for an editing solution in their suspicions that WYSIWYG is not a viable solution. There are only two editor widgets based on ContentEditable that I know of: Aloha and hallo.js. Aloha is badly documented and not easy to wrap your head around as it is quite a lot of code. Hallo.js sets out to be more lightweight, but for now is a bit too light: it lacks basic features like inserting links and images.

The problem with the culture of plain-text is not plain-text as a format. It is plain text as an interface. Michael Murtaugh has written a thoughtful piece on this in the context of the Institute of Network Cultures’ Digital Publishing Toolkit: Mark me up, mark me down!. Working with static site generators, it becomes clear they are envisioned as a one-way street: you change the source files, and the final (visual) result changes. There is no way in which a change in the generated page can be fed back into the source. Similarly, the Markdown format is designed to be typed in a text editor, and then programmatically turned into HTML. Whereas HTML allows for multiple kinds of interfaces (either more visual or more text oriented), a programmer-driven choice for Markdown forces the Unix love of editing plain text onto everybody.

If WYSIWYG were less of a taboo in Hacker Culture, we could also see interesting solutions that cross the divide between code and WYSIWYG. A great, basic example is the ‘reveal codes’ function of WordPerfect, the most popular word processor before the ascendancy of Microsoft Word. When running into a formatting problem, using ‘reveal codes’ shows an alternative view of the document, highlighting the structure by which the formatting instructions have been applied, not unlike the ‘DOM inspector’ in today’s browsers.

More radical examples of interfaces that combine the immediacy of manipulating a canvas with the potential of code can be found in Desktop software. The 3D editing program Blender has a tight integration between a visual interface and a code interface. All the actions performed in the interface are logged in programming code, so that one can easily base scripts on actions performed in the GUI. Selecting an element will also show its position in the object model, for easy scripting access.

HTML is flexible enough so that one can edit it with a text editor, but one can also create a graphical editor that works with HTML. Through the JavaScript language, a web interface has complete dynamic access to the page’s HTML elements. This makes it possible to imagine all kinds of interfaces that go beyond the paradigms we know from Microsoft Word on the one hand and code editors on the other. This potential comes at the expense of succinctness: to be flexible enough to work under multiple circumstances, HTML has to be quite verbose. Even if the HTML5 standard has already added some modifications to make it more sparse, for adepts of Hacker Culture it is not succinct enough: hence solutions like Markdown. However, to build a workflow around such a sparse plain-text format, is to negate that different people might want to interact with the content in a different way. The interface that is appropriate to a writer, might not be the interface that is appropriate to an editor, or to a designer.

Conclusion

The interfaces we use on the web are strongly influenced by the values of the programmers that make them, who reject the mainstream WYSIWYG paradigm. Yet What you see is what you get is not going anywhere soon. It is what made the Desktop computer possible, and for tasks such as document production, it is the computing reality for millions of users. Rather than posing a rejection, there is ample space to reinvent what WYSIWYG means, especially in the context of the web, and to find ways to combine it with the interface models that come from the traditions of Unix and Hacker Culture. Here’s to hoping that a new generation of developers will be able to go beyond the fetish for plain text, and help to invent exciting new ways of creating visual content.

The publication of the article coincides with the conference ‘Off the Press’, organised in Rotterdam by the Institute of Network Cultures as part of the Digital Publishing Toolkit.

The digital publishing toolkit is a project that tries to come up with tools and best practices for independent electronic publishing in the field of art. This means coming up with workflows that allow different professionals to add their value to the process: writers, editors, designers, developers (these categories may overlap).

As explained in this article, I like tight pants would advise the creators of the toolkit against interfaces too strongly biased towards programmer values but urge them to instead find solutions that allow multiple kinds of interfaces to the source—in short, using a plain text format like Markdown should not be forced upon all contributors.

Luckily, the trend has shifted in the last year or so.

This is exactly the problem the Guardian has been trying to solve with its recently released Scribe library: “What we needed was a library that only patched browser inconsistencies in contentEditable and, on top of that, ensured semantic markup — a very thin layer on top of contentEditable. That’s why we built Scribe.”

The Guardian’s blog post does a good job of listing other alternatives. The venerable CKEditor provides inline editing, based on ContentEditable, in its latest version: http://docs.ckeditor.com/#!/guide/dev_inline Between CKEditor with its huge feature set, and Scribe, which could be the minimalistic starting point for your own editor, you should be able to find a package that works for your use case.

Reply

Makes me think of Dave Eggers’ resuscitation of Garamond for McSweeney’s. Says Ellen Lupton:

Thinking with Type 2002 p. 44

McSweeney’s 9, Magazine cover, 2002. Designer and editor: Dave Eggers

Reply

It’s nice that over the course of a decade they managed to keep the design in the same visual spirit. Though instead of refining it, they seem to have regressed slightly. For instance, one can see on archive.org that in 2003 the design was fully fluid, whereas now it is artificially limited to 960 pixels in width, which makes these bars on the top look awkward.

Also, the editing view you loved so deeply is still there, but only to edit existing articles—when you make a new article you have your deeply maligned form views:

And what they were thinking when they added this progress-o-meter is beyond me:

Reply

As a programmer, what I find more worrying is that after all this time, being a primarily government funded organisation and making money creating websites for other government funded organisations, they never Open Sourced their content management system anyMeta.

Reply

Very interesting bit of polemic.

First, I don't think it's necessary to put GUI and text in opposition. They can both be useful and pleasant in certain contexts. GUI is great when you have a "spontaneous interface" where the user may not have the time or knowledge required to learn how to use it. More expert interfaces require more possibilities for expression than just clicking or tapping at buttons and menus. I don't need emacs on the ticket machine in the subway and I don't want my nuclear reactor to be controlled by a simplistic iPad app.

Some of the text-based interfaces you cite are popular not just because of their hardcore-nerdy street cred but because they use rule-based systems to automate much of their behavior. Ratpoison is a tiling window manager which means windows are arranged in a rule-based grid (hello swiss grid design). If you open a bunch of new windows they will fall into position relative to each other. The keyboard is mostly used to make small adjustments and do things that many power users do with the keyboard even in mainstream floating window managers (i.e. Alt-Tab to switch window focus). This can be a lot tidier and focused when one has a lot of things open.

Since you seem to mostly be talking about text editing systems, I would argue that one big problem with WYSIWYG is that it is based on the idea that the keyboard should only be used for linear text input and small localized editing and that all other operations should be done with the mouse. The mouse is privileged but it's not very expressive. Traditionally, this means you need to have a lot of buttons or menus all over the place which take up space and encourage particular actions. This use of space also means that the GUI has a powerful influence on your perception by use of colors, iconography and default typographic styles. This stands in pretty stark opposition to the blank slate interface of the text-based editor, which leaves you alone to confront your insecurities about the quality of the text you just wrote instead of fiddling around with the fonts.

I was talking to a filmmaker friend recently who still uses Windows, despite the fact that most film people have long since switched to the Mac. When I asked him if he didn't get frustrated with Windows, he replied that he got frustrated all the time. Paraphrasing: "it's a crappy unreliable system but I've been using it for years so I usually know what will go wrong and how to cope with it". So I think the enduring popularity of WYSIWYG has something to do with the fact that many people would rather stick to the crappy systems they know than switch to potentially more powerful ones they don't. And I can respect that*.

To conclude, I agree that there should be more UI with inline editing.** It's way more direct and intuitive. But text-based power-user interfaces have their place too. They are also important as a point of resistance for those of us who feel left out by the mainstream, corporate-dominated GUI culture.

* For more - see Olia Lialina's Turing Complete User.

** The oldest and for me, still best example is Tillswiki.

by Brendan - May 23, 2014 5:34 PM

Reply

Not exactly. The ContentEditable property has indeed been around for ages, first implemented in IE 5.5, then partially reverse-engineered by other browser vendors, but was only specified relatively recently (by the WHAT-WG).

On the subject of WYSIWYG editing in HTML, Nick Santos wrote an interesting low-level analysis on how ContentEditable, DOM Ranges and Selections, as currently specified, simply cannot provide a reliable editing experience. For a given input, and a given set of commands, the output can vary from one implementation to another. Therefore the promise of WYSIWYG (a two way mapping of display and structure) is broken.

https://medium.com/medium-eng/122d8a40e480

I very much share your desire to see more WYSIWYG and direct manipulation on the web. But it's my understanding that it can't be implemented with ContentEditable. All recent good editors (the one in Google Docs, or CodeMirror, ACE, etc) have dropped ContentEditable, and have re-implemented Ranges, Selections, etc from scratch (see http://codemirror.net/doc/internals.html ).

by Ned Baldessin - May 27, 2014 10:15 AM

Reply

Dear Ned, thanks for your reaction, and the link to the medium article: it is really insightful.

ContentEditable’s redeeming quality is that it works together with the DOM, the object the browser already uses to represent the page. The browser knows how to serialise the DOM to HTML, and the other way around. We have had some really bad experiences, for example, with Etherpad’s WYSIWYG editor. It is implemented in ACE, and uses character ranges to apply formatting. This breaks in unforeseeable ways, and because there is no serialisation format, we can not easily back-up or copy the information on the page. With the Aloha Editor, if something is going wrong, one can at least use the browser’s ‘inspect element’ function to see the structure produced, and to edit this way if necessary.

Google’s article on how they created the writing surface for Google Docs is great reading. And they don’t just reinvent contentEditable: they also reinvent the entire layout mechanism of the browser. For anyone not having Google’s resources, though, I wonder if this is a feasible approach. Another problem is that it works great for Google Docs, which is a monolithic application, but when providing ‘in-line editing’ in a CMS, for instance, the solution is going to have to be able to integrate with the existing layout model of HTML and CSS.

I do believe you when you say contentEditable in its current form has inherent problems. From this perspective, is the Guardian’s Scribe project just lipstick on the proverbial pig? Also, in Nick Santos’ article, he mentions:

I’m not sure exactly what that means (I have yet to wrap my head around the concept of the Shadow DOM), but might this be a fruitful direction? I have already run into the Shadow DOM recently when looking into DOM-diffing for another open problem… how to synchronise several contentEditable widgets across users in real-time!

Reply

I think you make many interesting points, and agree with your overall wish that WYSIWYG worked better for building the web.

However, blaming the Unix/’Hacker Culture’ and a ‘text fetish’ is, in my view, wide of the mark. In my experience, there are more dominant factors driving the poor state of WYSIWYG as a web-editing paradigm:

Semantic web movement - web-pages as ‘information’ need to be search-able, discover-able, linked. WYSIWYG editing (as it now stands) can often be in conflict with this goal.

The rise of CSS - Used by website developers to separate content from presentation, but difficult to integrate with WYSIWYG. The rise of CSS seems to have coincided with the ‘fall’ of Dreamweaver.

PDFs (mainly created via WYSIWYG inc. Word). It seems no one is reading them on the web. (Because of semantics, search-ability, discover-ability, inability to easily extract information etc. etc). PDFs could be seen as the failure of un-controlled WYSIWYG workflow.

Much web content originates from databases, and that information originates from a variety of sources. So WYSIWYG is relatively less important than before.

Basic WYSIWYG editing in CMSs like Wordpress is adequate for short articles. And users love Wordpress.

Webpage update(ability), security and maintainability are also key factors in driving towards more text-based entry.

The web moves fast - e.g. increasing use of CSS, new HTML5 elements, and Javascript for interactivity. It is complex and difficult for WYSIWYG to keep up.

The link between the Mac’s Unix underpinnings, web development on Linux and WYSIWYG usage is tenuous. Most website developers use Macs (very GUI); some Windows.

The command line is not just a Unix thing. People working with Windows at a deeper level (programmers, engineers) are just as likely to end up using scripts for automation and the command line for particular tasks.

Think of Excel as one of the most successful applications ever. Unlike Word, the data is structured. A text field is the primary data entry point. Excel ‘culture’ is pervasive. A lot of web content originates in Excel.

Similarly, consider the workflow for creating print publications and PDFs: the authors write in Word. Authors e-mail the lightly formatted Word file to the DTP specialist. The DTP specialist does all the layout, graphics and styling (InDesign). (plus revise and repeat)

Regarding the last two points, your article might better be titled: ‘Excel culture’ and fear of WYSIWYG. ‘Graphic Design/DTP culture’ and the fear of WYSIWYG.

I think your article would be closer to reality with just one paragraph mentioning the Unix/Hacker culture’s focus on text (it is certainly a factor, but the article really goes overboard).

There are some recent interesting developments such as Drupal 8’s stated goal to embrace in-place WYSIWYG: http://buytaert.net/from-aloha-to-ckeditor

by Tony - May 27, 2014 2:15 PM

Reply

As someone who's written a moderately complex CMS from scratch and maintained it for 10 years, thanks for saving me a lot of typing by covering what I wanted to say and more.

Basically the tendency in CMS to prefer to store content in as simple a format as possible, to my eyes, largely comes down to two things. First, HTML is a mess. Browsers will attempt to parse it no matter how bad it is, and WYSIWYG editing of HTML generates bad and/or broken HTML; no one has solved that yet. Formatted text from Word in particular (which arrives as HTML when pasted) is a complete disaster and has to be stripped bare before making its way into a page or it will destroy your page layout and formatting.

The second, and more fundamental, issue is that that content may be for a webpage now, but it's also going to be in an RSS feed, maybe a mobile app, which might not be rendering text as HTML, once it might have gone to a Flash app and in 5 years who knows what system it might have to be displayed in. The universal constant when it comes to displaying speech on a screen is text, not 14pt Times with an embedded image with a 12px gutter, so if you keep your content as simple as possible you're in a far better position to continue using it in ways you hadn't previously thought of.

by Tolan Blundell - May 28, 2014 9:54 PM

Reply

by Silvio - May 27, 2014 6:06 PM

Reply

Hey Silvio, thanks for your comment. Coincidentally, Ned’s comment higher up links to a post by a Medium engineer. I guess I should try it out.

It’s true that, as a writer, you find the writing space that works for you. There are of course many writers who prefer not to be busy with the look of the final result while they are writing. And there are writers who prefer the shorter distance: apparently Dave Eggers writes his books in QuarkXPress.

Reply

Thanks for your insight that it is not just programmers who like austere interfaces, but many writers as well, and I imagine the intersection of writers who program generally loves them! The thing is that, in most publishing processes (including the ones you are looking at for the Digital Publishing Toolkit) there will be multiple people in multiple roles who interact with the content, and not all of these roles might best be suited for such an interface. This is my takeaway from Michael’s post ‘Mark me up, mark me down’.

My point is that, through your technological choices, you can force such an interface on the rest of your collaborators. This is for example the case when you choose Markdown as a source format. Mind you, one could imagine creating an austere writing interface on top of XML or HTML: for a hacker, this might seem to add a level of indirection, but this opens up the possibility to engage with the content in other ways.

Because Markdown is just a text-file, not structured in the way XML, HTML or even JSON is, it is virtually impossible to build the two-way interaction upon it that characterises WYSIWYG editing. And there is an additional problem: how does the knowledge added by different participants in the content creation get its proper place in the source? The limited set of tags in Markdown is not enough for most publications. A designer might want to distinguish a paragraph as a lead-in, for example. An editor might also want to add other kinds of metadata (from simple classes to RDF/A), for re-use in the publishing process. This is information that you want as part of the source, yet there is no place for this in the Markdown…
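To make ‘a place in the source’ a bit more concrete, here is a minimal sketch in Python using only the standard library’s `html.parser`. The class name `lead-in`, the `property` value and the sample sentences are invented for illustration; the point is merely that in an HTML source, the designer’s and the editor’s annotations travel with the paragraph and can be read back by any tool later in the publishing process.

```python
from html.parser import HTMLParser

# Hypothetical source fragment: a designer marked the first paragraph as a
# lead-in, an editor added an RDFa-style property. Both names are made up.
SOURCE = (
    '<p class="lead-in" property="dc:abstract">A short introduction.</p>'
    '<p>The body text follows.</p>'
)


class ParagraphCollector(HTMLParser):
    """Collect every <p> element together with its attributes and text."""

    def __init__(self):
        super().__init__()
        self.paragraphs = []  # list of {"attrs": dict, "text": str}
        self._open = False    # True while we are inside a <p>

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self.paragraphs.append({"attrs": dict(attrs), "text": ""})
            self._open = True

    def handle_endtag(self, tag):
        if tag == "p":
            self._open = False

    def handle_data(self, data):
        if self._open:
            self.paragraphs[-1]["text"] += data


def collect_paragraphs(html):
    """Parse an HTML fragment and return its paragraphs with annotations."""
    parser = ParagraphCollector()
    parser.feed(html)
    parser.close()
    return parser.paragraphs


paragraphs = collect_paragraphs(SOURCE)
lead_ins = [p["text"] for p in paragraphs if p["attrs"].get("class") == "lead-in"]
print(lead_ins)
```

A layout tool could use the class to style the lead-in, while a metadata harvester could use the property, without either of them disturbing the text itself.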

Sorry that I keep coming back to the Markdown example, I think the question is broader than that—it is just the best example I know of for an unhealthy bias towards plain-text!

Reply

by Martín Gaitan - May 27, 2014 7:26 PM

Reply

Thanks for the link, Martín. I agree that hybrid solutions are the way to go!

Reply

I am intrigued by this blog post as I am also very fond of WYSIWYG editors, mainly for web design. For the past 8 years, I have been developing a CMS web development platform called Rennder. I have utilized a custom version of TinyMCE to incorporate a transparent, pixel-perfect contentEditable WYSIWYG editor within the drag & drop web page editor. It took me a lot of time and testing to make sure TinyMCE looks pixel-perfect across many different web browsers.

I am currently accepting early adopters to try the platform. www.rennder.com

by Mark Entingh - May 28, 2014 4:42 PM

Reply

by Mark Entingh - May 28, 2014 5:50 PM

Reply

Check the Linux Action Show on Tomb

https://www.dyne.org/software/tomb

there can be even an entertaining 30m of TV show on a shell :^D

Of course self-promotion, but I find it interesting to see how TV can work well with CLI

ciao

by jaromil - November 13, 2014 3:42 PM

Reply

The recent evolution of MediaWiki is worth mentioning. One of the main hurdles to overcome, for the Wikimedia Foundation, is to remove the hassle of editing the wiki code of pages.

This is now done, and accessible, in at least some versions of Wikipedia: I've been able to test the new system, pretty close to WYSIWYG, while editing pages in the French edition of the encyclopedia.

This is a major improvement of the user experience, and may be a way to help regular users become editors of Wikipedia, and other wikis relying on the same software.

by michaël - November 17, 2014 10:15 AM

Reply